江阴高端网站建设济宁做网站的电话

作业:day43的时候我们安排大家对自己找的数据集用简单cnn训练,现在可以尝试下借助这几天的知识来实现精度的进一步提高

import torch

import torch.nn as nn

import torch.optim as optim

from torchvision import datasets, transforms

from torch.utils.data import DataLoader

import matplotlib.pyplot as plt

import numpy as np

import os

import time

from torchvision import models# 设置中文字体支持

plt.rcParams["font.family"] = ["SimHei"]

plt.rcParams['axes.unicode_minus'] = False # 解决负号显示问题# 检查GPU是否可用

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

print(f"使用设备: {device}")# 定义通道注意力

class ChannelAttention(nn.Module):def __init__(self, in_channels, ratio=16):super().__init__()self.avg_pool = nn.AdaptiveAvgPool2d(1)self.max_pool = nn.AdaptiveMaxPool2d(1)self.fc = nn.Sequential(nn.Linear(in_channels, in_channels // ratio, bias=False),nn.ReLU(),nn.Linear(in_channels // ratio, in_channels, bias=False))self.sigmoid = nn.Sigmoid()def forward(self, x):b, c, h, w = x.shapeavg_out = self.fc(self.avg_pool(x).view(b, c))max_out = self.fc(self.max_pool(x).view(b, c))attention = self.sigmoid(avg_out + max_out).view(b, c, 1, 1)return x * attention## 空间注意力模块

class SpatialAttention(nn.Module):def __init__(self, kernel_size=7):super().__init__()self.conv = nn.Conv2d(2, 1, kernel_size, padding=kernel_size//2, bias=False)self.sigmoid = nn.Sigmoid()def forward(self, x):avg_out = torch.mean(x, dim=1, keepdim=True)max_out, _ = torch.max(x, dim=1, keepdim=True)pool_out = torch.cat([avg_out, max_out], dim=1)attention = self.conv(pool_out)return x * self.sigmoid(attention)## CBAM模块

class CBAM(nn.Module):def __init__(self, in_channels, ratio=16, kernel_size=7):super().__init__()self.channel_attn = ChannelAttention(in_channels, ratio)self.spatial_attn = SpatialAttention(kernel_size)def forward(self, x):x = self.channel_attn(x)x = self.spatial_attn(x)return x# 自定义ResNet18模型,插入CBAM模块

class ResNet18_CBAM(nn.Module):def __init__(self, num_classes=10, pretrained=True, cbam_ratio=16, cbam_kernel=7):super().__init__()# 加载预训练ResNet18self.backbone = models.resnet18(pretrained=pretrained) # 修改首层卷积以适应32x32输入self.backbone.conv1 = nn.Conv2d(in_channels=3, out_channels=64, kernel_size=3, stride=1, padding=1, bias=False)self.backbone.maxpool = nn.Identity() # 移除原始MaxPool层# 在每个残差块组后添加CBAM模块self.cbam_layer1 = CBAM(in_channels=64, ratio=cbam_ratio, kernel_size=cbam_kernel)self.cbam_layer2 = CBAM(in_channels=128, ratio=cbam_ratio, kernel_size=cbam_kernel)self.cbam_layer3 = CBAM(in_channels=256, ratio=cbam_ratio, kernel_size=cbam_kernel)self.cbam_layer4 = CBAM(in_channels=512, ratio=cbam_ratio, kernel_size=cbam_kernel)# 修改分类头self.backbone.fc = nn.Linear(in_features=512, out_features=num_classes)def forward(self, x):x = self.backbone.conv1(x)x = self.backbone.bn1(x)x = self.backbone.relu(x) # [B, 64, 32, 32]# 第一层残差块 + CBAMx = self.backbone.layer1(x) # [B, 64, 32, 32]x = self.cbam_layer1(x)# 第二层残差块 + CBAMx = self.backbone.layer2(x) # [B, 128, 16, 16]x = self.cbam_layer2(x)# 第三层残差块 + CBAMx = self.backbone.layer3(x) # [B, 256, 8, 8]x = self.cbam_layer3(x)# 第四层残差块 + CBAMx = self.backbone.layer4(x) # [B, 512, 4, 4]x = self.cbam_layer4(x)# 全局平均池化 + 分类x = self.backbone.avgpool(x) # [B, 512, 1, 1]x = torch.flatten(x, 1) # [B, 512]x = self.backbone.fc(x) # [B, num_classes]return x# ==================== 数据加载修改部分 ====================

# 数据集路径

train_data_dir = 'archive/Train_Test_Valid/Train'

test_data_dir = 'archive/Train_Test_Valid/test'# 获取类别数量

num_classes = len(os.listdir(train_data_dir))

print(f"检测到 {num_classes} 个类别")# 数据预处理

train_transform = transforms.Compose([transforms.RandomResizedCrop(32),transforms.RandomHorizontalFlip(),transforms.ColorJitter(brightness=0.2, contrast=0.2, saturation=0.2, hue=0.1),transforms.RandomRotation(15),transforms.ToTensor(),transforms.Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225])

])test_transform = transforms.Compose([transforms.Resize(32),transforms.CenterCrop(32),transforms.ToTensor(),transforms.Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225])

])# 加载数据集

train_dataset = datasets.ImageFolder(root=train_data_dir, transform=train_transform)

test_dataset = datasets.ImageFolder(root=test_data_dir, transform=test_transform)train_loader = DataLoader(train_dataset, batch_size=64, shuffle=True, num_workers=4)

test_loader = DataLoader(test_dataset, batch_size=64, shuffle=False, num_workers=4)print(f"训练集大小: {len(train_dataset)} 张图片")

print(f"测试集大小: {len(test_dataset)} 张图片")# ==================== 训练函数 ====================

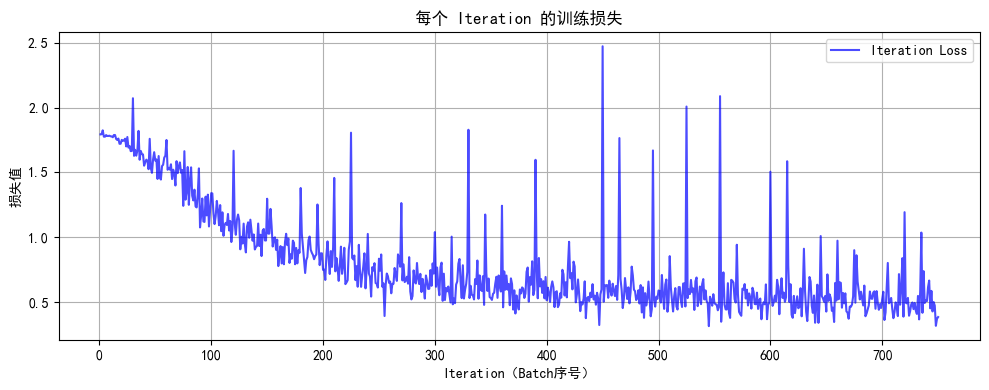

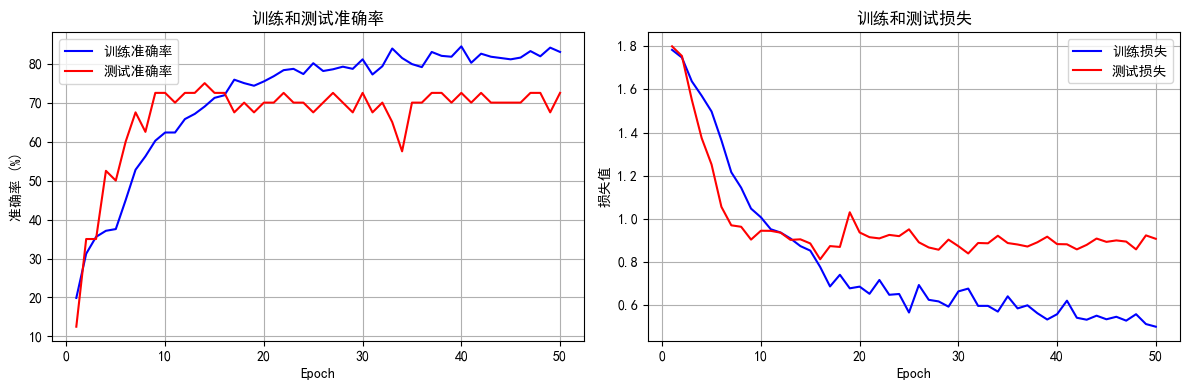

def set_trainable_layers(model, trainable_parts):print(f"\n---> 解冻以下部分并设为可训练: {trainable_parts}")for name, param in model.named_parameters():param.requires_grad = Falsefor part in trainable_parts:if part in name:param.requires_grad = Truebreakdef train_staged_finetuning(model, criterion, train_loader, test_loader, device, epochs):optimizer = Noneall_iter_losses, iter_indices = [], []train_acc_history, test_acc_history = [], []train_loss_history, test_loss_history = [], []for epoch in range(1, epochs + 1):epoch_start_time = time.time()# 动态调整学习率和冻结层if epoch == 1:print("\n" + "="*50 + "\n🚀 **阶段 1:训练注意力模块和分类头**\n" + "="*50)set_trainable_layers(model, ["cbam", "backbone.fc"])optimizer = optim.Adam(filter(lambda p: p.requires_grad, model.parameters()), lr=1e-3)elif epoch == 6:print("\n" + "="*50 + "\n✈️ **阶段 2:解冻高层卷积层 (layer3, layer4)**\n" + "="*50)set_trainable_layers(model, ["cbam", "backbone.fc", "backbone.layer3", "backbone.layer4"])optimizer = optim.Adam(filter(lambda p: p.requires_grad, model.parameters()), lr=1e-4)elif epoch == 21:print("\n" + "="*50 + "\n🛰️ **阶段 3:解冻所有层,进行全局微调**\n" + "="*50)for param in model.parameters(): param.requires_grad = Trueoptimizer = optim.Adam(model.parameters(), lr=1e-5)# 训练循环model.train()running_loss, correct, total = 0.0, 0, 0for batch_idx, (data, target) in enumerate(train_loader):data, target = data.to(device), target.to(device)optimizer.zero_grad()output = model(data)loss = criterion(output, target)loss.backward()optimizer.step()iter_loss = loss.item()all_iter_losses.append(iter_loss)iter_indices.append((epoch - 1) * len(train_loader) + batch_idx + 1)running_loss += iter_loss_, predicted = output.max(1)total += target.size(0)correct += predicted.eq(target).sum().item()if (batch_idx + 1) % 100 == 0:print(f'Epoch: {epoch}/{epochs} | Batch: {batch_idx+1}/{len(train_loader)} 'f'| 单Batch损失: {iter_loss:.4f} | 累计平均损失: {running_loss/(batch_idx+1):.4f}')epoch_train_loss = running_loss / len(train_loader)epoch_train_acc = 100. * correct / totaltrain_loss_history.append(epoch_train_loss)train_acc_history.append(epoch_train_acc)# 测试循环model.eval()test_loss, correct_test, total_test = 0, 0, 0with torch.no_grad():for data, target in test_loader:data, target = data.to(device), target.to(device)output = model(data)test_loss += criterion(output, target).item()_, predicted = output.max(1)total_test += target.size(0)correct_test += predicted.eq(target).sum().item()epoch_test_loss = test_loss / len(test_loader)epoch_test_acc = 100. * correct_test / total_testtest_loss_history.append(epoch_test_loss)test_acc_history.append(epoch_test_acc)print(f'Epoch {epoch}/{epochs} 完成 | 耗时: {time.time() - epoch_start_time:.2f}s | 训练准确率: {epoch_train_acc:.2f}% | 测试准确率: {epoch_test_acc:.2f}%')# 绘图函数def plot_iter_losses(losses, indices):plt.figure(figsize=(10, 4))plt.plot(indices, losses, 'b-', alpha=0.7, label='Iteration Loss')plt.xlabel('Iteration(Batch序号)')plt.ylabel('损失值')plt.title('每个 Iteration 的训练损失')plt.legend()plt.grid(True)plt.tight_layout()plt.show()def plot_epoch_metrics(train_acc, test_acc, train_loss, test_loss):epochs = range(1, len(train_acc) + 1)plt.figure(figsize=(12, 4))plt.subplot(1, 2, 1)plt.plot(epochs, train_acc, 'b-', label='训练准确率')plt.plot(epochs, test_acc, 'r-', label='测试准确率')plt.xlabel('Epoch')plt.ylabel('准确率 (%)')plt.title('训练和测试准确率')plt.legend(); plt.grid(True)plt.subplot(1, 2, 2)plt.plot(epochs, train_loss, 'b-', label='训练损失')plt.plot(epochs, test_loss, 'r-', label='测试损失')plt.xlabel('Epoch')plt.ylabel('损失值')plt.title('训练和测试损失')plt.legend(); plt.grid(True)plt.tight_layout()plt.show()print("\n训练完成! 开始绘制结果图表...")plot_iter_losses(all_iter_losses, iter_indices)plot_epoch_metrics(train_acc_history, test_acc_history, train_loss_history, test_loss_history)return epoch_test_acc# ==================== 主程序 ====================

model = ResNet18_CBAM(num_classes=num_classes).to(device)

criterion = nn.CrossEntropyLoss()

epochs = 50print("开始使用带分阶段微调策略的ResNet18+CBAM模型进行训练...")

final_accuracy = train_staged_finetuning(model, criterion, train_loader, test_loader, device, epochs)

print(f"训练完成!最终测试准确率: {final_accuracy:.2f}%")# 保存模型

torch.save(model.state_dict(), 'resnet18_cbam_custom.pth')

print("模型已保存为: resnet18_cbam_custom.pth")使用设备: cpu

检测到 6 个类别

训练集大小: 900 张图片

测试集大小: 40 张图片

d:\anaconda\Lib\site-packages\torchvision\models\_utils.py:208: UserWarning: The parameter 'pretrained' is deprecated since 0.13 and may be removed in the future, please use 'weights' instead.

warnings.warn(

d:\anaconda\Lib\site-packages\torchvision\models\_utils.py:223: UserWarning: Arguments other than a weight enum or `None` for 'weights' are deprecated since 0.13 and may be removed in the future. The current behavior is equivalent to passing `weights=ResNet18_Weights.IMAGENET1K_V1`. You can also use `weights=ResNet18_Weights.DEFAULT` to get the most up-to-date weights.

warnings.warn(msg)

开始使用带分阶段微调策略的ResNet18+CBAM模型进行训练...

==================================================

🚀 **阶段 1:训练注意力模块和分类头**

==================================================

---> 解冻以下部分并设为可训练: ['cbam', 'backbone.fc']

Epoch 1/50 完成 | 耗时: 17.97s | 训练准确率: 19.89% | 测试准确率: 12.50%

Epoch 2/50 完成 | 耗时: 16.93s | 训练准确率: 31.22% | 测试准确率: 35.00%

Epoch 3/50 完成 | 耗时: 17.15s | 训练准确率: 35.56% | 测试准确率: 35.00%

Epoch 4/50 完成 | 耗时: 17.36s | 训练准确率: 37.11% | 测试准确率: 52.50%

Epoch 5/50 完成 | 耗时: 17.60s | 训练准确率: 37.56% | 测试准确率: 50.00%

==================================================

✈️ **阶段 2:解冻高层卷积层 (layer3, layer4)**

==================================================

---> 解冻以下部分并设为可训练: ['cbam', 'backbone.fc', 'backbone.layer3', 'backbone.layer4']

Epoch 6/50 完成 | 耗时: 19.17s | 训练准确率: 45.00% | 测试准确率: 60.00%

Epoch 7/50 完成 | 耗时: 19.26s | 训练准确率: 52.78% | 测试准确率: 67.50%

Epoch 8/50 完成 | 耗时: 19.21s | 训练准确率: 56.22% | 测试准确率: 62.50%

Epoch 9/50 完成 | 耗时: 19.33s | 训练准确率: 60.22% | 测试准确率: 72.50%

Epoch 10/50 完成 | 耗时: 19.32s | 训练准确率: 62.33% | 测试准确率: 72.50%

Epoch 11/50 完成 | 耗时: 19.34s | 训练准确率: 62.33% | 测试准确率: 70.00%

Epoch 12/50 完成 | 耗时: 29.18s | 训练准确率: 65.78% | 测试准确率: 72.50%

Epoch 13/50 完成 | 耗时: 37.93s | 训练准确率: 67.11% | 测试准确率: 72.50%

Epoch 14/50 完成 | 耗时: 36.39s | 训练准确率: 69.00% | 测试准确率: 75.00%

Epoch 15/50 完成 | 耗时: 37.31s | 训练准确率: 71.22% | 测试准确率: 72.50%

Epoch 16/50 完成 | 耗时: 36.51s | 训练准确率: 71.89% | 测试准确率: 72.50%

Epoch 17/50 完成 | 耗时: 24.59s | 训练准确率: 75.89% | 测试准确率: 67.50%

Epoch 18/50 完成 | 耗时: 36.65s | 训练准确率: 75.00% | 测试准确率: 70.00%

Epoch 19/50 完成 | 耗时: 20.12s | 训练准确率: 74.33% | 测试准确率: 67.50%

Epoch 20/50 完成 | 耗时: 19.23s | 训练准确率: 75.44% | 测试准确率: 70.00%

==================================================

🛰️ **阶段 3:解冻所有层,进行全局微调**

==================================================

Epoch 21/50 完成 | 耗时: 22.10s | 训练准确率: 76.78% | 测试准确率: 70.00%

Epoch 22/50 完成 | 耗时: 22.77s | 训练准确率: 78.33% | 测试准确率: 72.50%

Epoch 23/50 完成 | 耗时: 22.42s | 训练准确率: 78.67% | 测试准确率: 70.00%

Epoch 24/50 完成 | 耗时: 38.86s | 训练准确率: 77.33% | 测试准确率: 70.00%

Epoch 25/50 完成 | 耗时: 33.47s | 训练准确率: 80.11% | 测试准确率: 67.50%

Epoch 26/50 完成 | 耗时: 44.94s | 训练准确率: 78.11% | 测试准确率: 70.00%

Epoch 27/50 完成 | 耗时: 45.14s | 训练准确率: 78.56% | 测试准确率: 72.50%

Epoch 28/50 完成 | 耗时: 43.69s | 训练准确率: 79.22% | 测试准确率: 70.00%

Epoch 29/50 完成 | 耗时: 22.11s | 训练准确率: 78.67% | 测试准确率: 67.50%

Epoch 30/50 完成 | 耗时: 23.66s | 训练准确率: 81.11% | 测试准确率: 72.50%

Epoch 31/50 完成 | 耗时: 21.64s | 训练准确率: 77.22% | 测试准确率: 67.50%

Epoch 32/50 完成 | 耗时: 21.86s | 训练准确率: 79.33% | 测试准确率: 70.00%

Epoch 33/50 完成 | 耗时: 21.48s | 训练准确率: 83.89% | 测试准确率: 65.00%

Epoch 34/50 完成 | 耗时: 23.06s | 训练准确率: 81.44% | 测试准确率: 57.50%

Epoch 35/50 完成 | 耗时: 239.58s | 训练准确率: 79.89% | 测试准确率: 70.00%

Epoch 36/50 完成 | 耗时: 35.76s | 训练准确率: 79.11% | 测试准确率: 70.00%

Epoch 37/50 完成 | 耗时: 22.56s | 训练准确率: 83.00% | 测试准确率: 72.50%

Epoch 38/50 完成 | 耗时: 22.46s | 训练准确率: 82.00% | 测试准确率: 72.50%

Epoch 39/50 完成 | 耗时: 22.68s | 训练准确率: 81.78% | 测试准确率: 70.00%

Epoch 40/50 完成 | 耗时: 22.62s | 训练准确率: 84.44% | 测试准确率: 72.50%

Epoch 41/50 完成 | 耗时: 22.75s | 训练准确率: 80.22% | 测试准确率: 70.00%

Epoch 42/50 完成 | 耗时: 23.17s | 训练准确率: 82.56% | 测试准确率: 72.50%

Epoch 43/50 完成 | 耗时: 23.40s | 训练准确率: 81.78% | 测试准确率: 70.00%

Epoch 44/50 完成 | 耗时: 23.70s | 训练准确率: 81.44% | 测试准确率: 70.00%

Epoch 45/50 完成 | 耗时: 23.79s | 训练准确率: 81.11% | 测试准确率: 70.00%

Epoch 46/50 完成 | 耗时: 23.40s | 训练准确率: 81.56% | 测试准确率: 70.00%

Epoch 47/50 完成 | 耗时: 22.86s | 训练准确率: 83.22% | 测试准确率: 72.50%

Epoch 48/50 完成 | 耗时: 23.04s | 训练准确率: 81.89% | 测试准确率: 72.50%

Epoch 49/50 完成 | 耗时: 22.77s | 训练准确率: 84.11% | 测试准确率: 67.50%

Epoch 50/50 完成 | 耗时: 22.83s | 训练准确率: 83.00% | 测试准确率: 72.50%

训练完成! 开始绘制结果图表...

@浙大疏锦行

@浙大疏锦行